Scaling a system inside a growing project is rarely about “making it faster.” It is about keeping promises while traffic, data volume, and team size change at different speeds. The real risk is not a dramatic collapse; it is a slow drift into unreliable delivery and hidden fragility that only appears when change is needed most.

In smaller projects, scaling mistakes often feel like “a rough week.” In larger systems, the same mistake can become a repeating incident pattern, a costly rework, or a trust problem between teams and stakeholders. In either case, the goal is not to predict every failure. It is to tell reversible decisions apart from irreversible ones and to reduce the number of surprises.

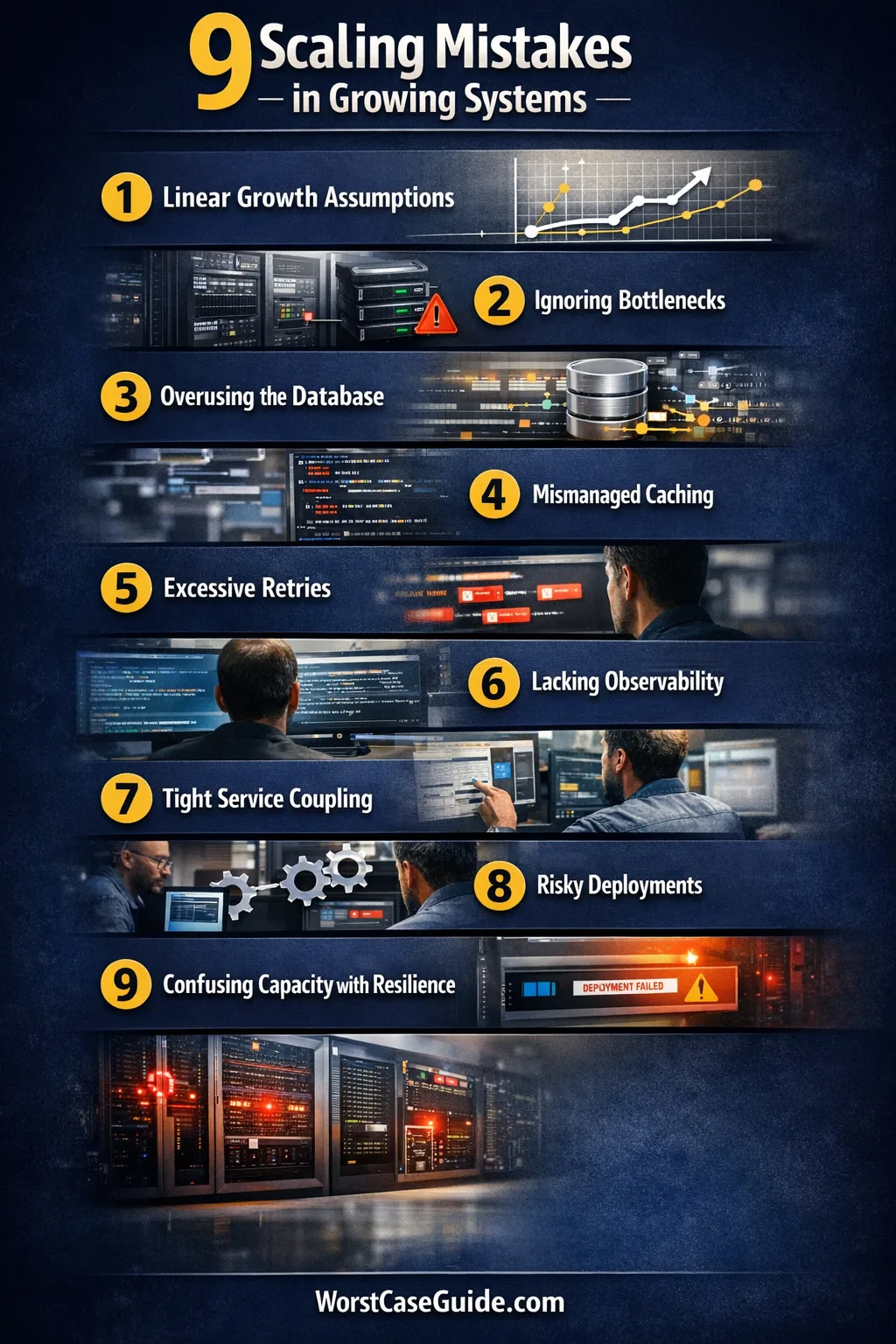

Why System Scaling Gets Risky In Growing Projects

Growth creates mismatched constraints. Usage may double while headcount stays flat; features may multiply while the operational maturity stays the same; a new customer segment may bring different traffic shapes than the original plan. Scaling then becomes less about “capacity” and more about coordination and predictability.

The worst-case outcome is usually a chain reaction: a small performance issue triggers a workaround, the workaround creates new coupling, and the coupling turns routine deployments into high-risk events. The project still “works,” but each change carries unclear blast radius and rising operational overhead.

Scaling also includes work that rarely gets called scaling: more endpoints, more background jobs, more integrations, more environments, and more people touching production. Each one increases surface area, even if traffic stays the same.

Common Wrong Assumptions That Make Scaling Harder

- “We will optimize later.” Later often arrives as incident time, when the system is least safe to change.

- “More servers fixes it.” Some bottlenecks are single-threaded, stateful, or limited by shared dependencies.

- “The database is fine; it’s proven tech.” The risk is usually schema growth and query shape, not the brand name.

- “We just need caching.” Caching can add staleness, invalidation complexity, and new failure modes.

- “Observability can wait.” Waiting turns unknowns into guesswork and extends time-to-recover.

System Scaling Mistakes In Growing Projects

Mistake 1: Treating Growth As A Linear Problem

Scaling pressure often shows up as “just a bit slower.” It is easy to assume the system will behave the same way at 2× load, only with bigger numbers. In reality, many systems have threshold effects: queue depth changes latency, retries amplify traffic, and a small hotspot becomes dominant.
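A textbook queueing model shows why the curve bends instead of rising in a straight line. In an M/M/1 queue (one server, random arrivals, random service times; a deliberate simplification, not a model of any particular service), mean time in system is W = 1/(μ − λ), where μ is the service rate and λ the arrival rate. The sketch below assumes a hypothetical server that completes 100 requests per second.

```python
def mm1_mean_latency_ms(arrival_rate: float, service_rate: float) -> float:
    """Mean time in system (waiting + service) for an M/M/1 queue, in milliseconds.

    Assumes Poisson arrivals and exponential service times; the formula only
    holds while arrival_rate < service_rate (utilization below 100%).
    """
    if arrival_rate >= service_rate:
        return float("inf")  # the queue grows without bound
    return 1000.0 / (service_rate - arrival_rate)


SERVICE_RATE = 100.0  # hypothetical capacity: 100 requests per second

for load in (50, 80, 90, 95, 99):
    latency = mm1_mean_latency_ms(load, SERVICE_RATE)
    print(f"{load:>3} req/s ({load}% busy): {latency:6.1f} ms")
```

In this model, going from 50 to 90 requests per second (less than 2× the load) takes mean latency from 20 ms to 100 ms, and the last few percent of utilization cost far more than the first fifty. Real services deviate from M/M/1, but a sharp bend near saturation is a common pattern.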

Why It Happens

Early success creates a mental model where current architecture feels “good enough.” Teams also plan with average traffic, while real systems fail on peak patterns and bursts.

Early Warning Signs

- Latency is stable most days, then spikes during predictable events.

- Error rates rise only after a specific threshold (not gradually).

- “It’s fine in staging” becomes a recurring phrase, because staging lacks production shape.

Worst-Case Outcome (Without Drama)

Decisions based on linear thinking can lock a project into reactive scaling: adding capacity, adding retries, adding quick patches. The system stays online, yet each growth step increases variance and reduces predictability, making planning and shipping harder.

A Safer Approach

It often helps to track percentiles such as p95 and p99 rather than averages, and to describe growth using load shapes: bursts, fan-out, long tails, and batch windows. In a smaller project, a lightweight load test can be enough. In larger systems, capacity models and failure injection can reduce surprises.
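A small example shows why averages mislead. The sketch below uses a nearest-rank percentile and made-up latency samples (95 fast requests and 5 slow ones during a burst); it is illustrative, not a monitoring implementation.

```python
import math
import statistics
from typing import List


def percentile(samples: List[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical latencies in ms: 95 fast requests, 5 slow ones during a burst.
latencies = [20.0] * 95 + [900.0] * 5

print("mean:", statistics.mean(latencies))
print("p50: ", percentile(latencies, 50))
print("p99: ", percentile(latencies, 99))
```

The mean comes out at 64 ms, a value no request actually experienced, while p50 (20 ms) and p99 (900 ms) describe what typical users and the unlucky tail actually saw. Many metrics systems estimate percentiles from histograms rather than raw samples, but the lesson is the same.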

Mistake 2: Scaling The App While Ignoring The Bottleneck Map

Teams often scale what they control most easily: application servers, containers, or service replicas. If the constraint lives elsewhere—database write capacity, external APIs, shared caches, or a single queue—this creates a false sense of progress.

Why It Happens

It is natural to interpret scaling as “more of the same.” The missing piece is a bottleneck map: a shared view of where contention can occur and which dependencies have hard limits.

Early Warning Signs

- Adding replicas improves throughput, but latency still climbs during peaks.

- Incidents correlate with a single dependency (DB, queue, third-party), not with deployments.

- Different teams report “the system is slow,” yet each team measures a different part of the path.

Worst-Case Outcome (Without Drama)

Scaling the wrong layer can turn a constrained dependency into a traffic amplifier: more app nodes generate more calls, more retries, and more contention. That raises operational load and produces incidents that feel “random,” even though the pattern is structural.

A Safer Approach

A bottleneck map can start simple: request path, dependencies, and the top three constraints. For smaller projects, a basic list is often enough. For larger systems, it can include rate limits, connection pools, and shared state. The goal is clarity, not perfection.

| Symptom | Often Points To | First Thing To Verify |
|---|---|---|
| Latency spikes with stable CPU | Dependency waits or lock contention | DB slow queries, connection pool saturation |
| Error bursts after timeouts | Retry storms or thundering herd | Retry policy, backoff, circuit breakers |
| Throughput flatlines | Single-thread limits or serialization points | Locks, queues, shared caches, critical sections |
| “Works in staging” | Different load shape or missing data volume | Dataset size, concurrency, peak simulation |
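A bottleneck map can start as a few lines of data. The sketch below assumes each dependency has a rough hard limit and a known fan-out per user request; the dependency names and numbers are hypothetical placeholders, not benchmarks.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Dependency:
    name: str
    capacity_per_sec: float   # rough hard limit of the dependency itself
    calls_per_request: float  # fan-out: calls made for each user request


def bottleneck(deps: List[Dependency]) -> Tuple[str, float]:
    """Return the dependency that saturates first and the user request rate at which it does."""
    limits = {d.name: d.capacity_per_sec / d.calls_per_request for d in deps}
    name = min(limits, key=limits.get)
    return name, limits[name]


# Hypothetical numbers; replace with measured limits for a real request path.
request_path = [
    Dependency("app replicas", capacity_per_sec=5000, calls_per_request=1),
    Dependency("primary DB writes", capacity_per_sec=1200, calls_per_request=2),
    Dependency("payments API", capacity_per_sec=800, calls_per_request=1),
]

print(bottleneck(request_path))
```

With these numbers, the database caps the path at 600 user requests per second (1,200 writes ÷ 2 writes per request), so adding app replicas raises a limit that was never binding. That is the false sense of progress described above, expressed as arithmetic.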

Mistake 3: Letting The Database Become The Default Integration Layer

As projects grow, it can be tempting to use the database as the shared language for everything: new features, analytics, background jobs, and integrations. This centralizes value, yet it also centralizes risk, especially when write patterns and schema coupling expand.

Why It Happens

Databases feel reliable and familiar. They provide transactions, a single source of truth, and quick iteration. Over time, teams add “just one more table” until multiple services rely on shared joins and implicit contracts.

Early Warning Signs

- Schema changes require cross-team coordination and careful timing.

- Read queries multiply and become hard to explain or hard to cache.

- Small features cause migration anxiety because rollback is uncertain.

Worst-Case Outcome (Without Drama)

The database turns into a deployment gate. Teams slow down not because they lack ideas, but because each change has wide blast radius. Incidents can become longer because recovery requires data fixes rather than simple service restarts.

A Safer Approach

A safer pattern is to keep the database as a persistence layer, not a universal interface. That can mean clearer boundaries, stable schemas, and explicit APIs or events for cross-domain needs. In smaller projects, even a simple rule like “no cross-service joins” can reduce coupling and protect change velocity.
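One way to offer events instead of shared tables is a transactional outbox: the owning service writes its business row and an event row in the same transaction, and a relay publishes those events to other teams. The sketch below uses Python's standard-library `sqlite3` with an in-memory database as a stand-in; the table names, the `order.placed` topic, and the `publish` callback are hypothetical. It is one named pattern among several, not the only way to apply the rule.

```python
import json
import sqlite3
from typing import Callable

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT NOT NULL);
    CREATE TABLE outbox (
        id INTEGER PRIMARY KEY AUTOINCREMENT,
        topic TEXT NOT NULL,
        payload TEXT NOT NULL,
        published INTEGER NOT NULL DEFAULT 0
    );
""")


def place_order(order_id: int) -> None:
    """Write the order and its event in one transaction: both commit or neither does."""
    with conn:  # commits on success, rolls back if either insert fails
        conn.execute("INSERT INTO orders (id, status) VALUES (?, ?)", (order_id, "placed"))
        conn.execute(
            "INSERT INTO outbox (topic, payload) VALUES (?, ?)",
            ("order.placed", json.dumps({"version": 1, "order_id": order_id})),
        )


def relay_outbox(publish: Callable[[str, dict], None]) -> int:
    """Hand unpublished events to `publish` (a stand-in for a broker client), then mark them sent."""
    rows = conn.execute(
        "SELECT id, topic, payload FROM outbox WHERE published = 0 ORDER BY id"
    ).fetchall()
    for row_id, topic, payload in rows:
        publish(topic, json.loads(payload))
        with conn:
            conn.execute("UPDATE outbox SET published = 1 WHERE id = ?", (row_id,))
    return len(rows)


received = []
place_order(42)
relay_outbox(lambda topic, event: received.append((topic, event)))
print(received)  # [('order.placed', {'version': 1, 'order_id': 42})]
```

Consumers depend on the event payload, which carries a `version` field, rather than on the `orders` schema, so the owning team can change its tables without a cross-team migration. The relay delivers at least once: if publishing succeeds but the update fails, the event goes out again, so consumers should tolerate duplicates.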

Mistake 4: Adding Caches Without Owning Staleness And Invalidation

Caching is often introduced as a performance fix. In growing projects, it becomes a data correctness problem: cached results can be stale, invalidation can be incomplete, and “temporary” TTLs can become permanent assumptions.

Why It Happens

Caches are attractive because they are fast to add and easy to measure in hit rates. What is harder is agreeing on freshness, consistency, and which user actions must reflect updates immediately.

Early Warning Signs

- Bug reports describe “it fixes itself later,” indicating stale reads.

- Cache keys proliferate and become hard to reason about.

- Teams avoid touching the cache layer because changes feel risky and unpredictable.

Worst-Case Outcome (Without Drama)

The system can split into two realities: the real data and the cached data. That creates subtle user trust issues, plus operational risk when invalidation causes cache stampedes or unexpected load spikes.

A Safer Approach

It is often safer to define cache behavior in product terms: “This page can be stale for X seconds,” or “This action must update within one request.” From there, caching choices can be tied to freshness budgets and invalidation ownership, rather than added as a universal performance patch.
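A freshness budget can be written directly into the cache. The sketch below is a minimal read-through cache in which the TTL is the product-level staleness allowance and invalidation is an explicit method for whichever code path owns writes. The class name and the injectable `clock` are illustrative assumptions; it is single-process and not thread-safe.

```python
import time
from typing import Callable, Dict, Hashable, Tuple


class FreshnessCache:
    """Read-through cache whose TTL is a product-level freshness budget."""

    def __init__(self, max_staleness_s: float,
                 clock: Callable[[], float] = time.monotonic):
        self.max_staleness_s = max_staleness_s  # e.g. "this page can be stale for 30 s"
        self.clock = clock  # injectable so examples and tests can control time
        self._entries: Dict[Hashable, Tuple[float, object]] = {}

    def get(self, key: Hashable, load: Callable[[], object]) -> object:
        now = self.clock()
        hit = self._entries.get(key)
        if hit is not None and now - hit[0] <= self.max_staleness_s:
            return hit[1]  # still within the freshness budget
        value = load()  # miss or too stale: read the source of truth
        self._entries[key] = (now, value)
        return value

    def invalidate(self, key: Hashable) -> None:
        """Called by the write path that owns this data, so the next read is fresh."""
        self._entries.pop(key, None)


fake_now = [0.0]
prices = {"sku-1": 10}
cache = FreshnessCache(max_staleness_s=30, clock=lambda: fake_now[0])

print(cache.get("sku-1", lambda: prices["sku-1"]))  # 10 (loaded)
prices["sku-1"] = 12
print(cache.get("sku-1", lambda: prices["sku-1"]))  # 10 (stale, but within budget)
cache.invalidate("sku-1")  # the write path owns invalidation
print(cache.get("sku-1", lambda: prices["sku-1"]))  # 12
```

The sketch deliberately leaves out stampede protection: when a popular key expires, every caller reloads it at once. Guarding against that, for example by letting one caller refresh while others keep the old value, is exactly the kind of behavior that needs an owner.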

Mistake 5: Relying On Retries As A Reliability Strategy

Retries can make individual requests succeed more often. In growing systems, they can also multiply traffic at the worst possible time, when a dependency is already degraded. Under high concurrency, retries can act as load accelerators rather than safety nets.

Why It Happens

Retries feel harmless because they are simple and local. The missing layer is a shared understanding of backoff, idempotency, and what happens when many clients retry at once.

Early Warning Signs

- During incidents, inbound traffic stays flat but downstream calls jump.

- Timeouts cluster, and recovery is slower than expected.

- Log volume surges because retries create duplicate error traces and noise.

Worst-Case Outcome (Without Drama)

Instead of graceful degradation, the system can enter a self-sustaining overload where retries keep pressure high. Teams then respond by increasing timeouts or adding more retries, which further reduces recovery margin and makes failures longer.

A Safer Approach

A safer approach often includes bounded retries, exponential backoff, and patterns like circuit breakers so failure stops propagating. In smaller projects, even documenting retry rules can prevent inconsistent behavior. In larger systems, aligning on idempotent operations reduces accidental duplication.
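The sketch below combines bounded retries (capped exponential backoff with full jitter) with a minimal consecutive-failure circuit breaker. Thresholds, delays, and class names are illustrative assumptions, and `sleep` and `clock` are injectable so the example runs instantly; it is not a production library.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised instead of calling a dependency that is currently considered unhealthy."""


class CircuitBreaker:
    """Opens after `failure_threshold` consecutive failures; allows a probe call after `reset_after_s`.

    Not thread-safe; a breaker shared across threads would need a lock.
    """

    def __init__(self, failure_threshold: int = 5, reset_after_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None and self.clock() - self.opened_at < self.reset_after_s:
            raise CircuitOpenError("dependency marked unhealthy; failing fast")
        # Closed, or open long enough that this call acts as a probe.
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # (re)open and restart the cool-down
            raise
        self.failures = 0
        self.opened_at = None
        return result


def retry_with_backoff(fn: Callable[[], T], max_attempts: int = 3,
                       base_delay_s: float = 0.1, max_delay_s: float = 2.0,
                       sleep: Callable[[float], None] = time.sleep) -> T:
    """Bounded retries with capped exponential backoff and full jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return fn()
        except CircuitOpenError:
            raise  # the breaker says stop; retrying would only add load
        except Exception:
            if attempt == max_attempts - 1:
                raise  # retry budget exhausted: surface the failure
            cap = min(max_delay_s, base_delay_s * 2 ** attempt)
            sleep(random.uniform(0, cap))  # jitter keeps clients from retrying in lockstep
    raise AssertionError("unreachable")


breaker = CircuitBreaker(failure_threshold=3, reset_after_s=10)


def slow_dependency() -> str:
    raise TimeoutError("dependency slow")  # stand-in for a real downstream call


try:
    retry_with_backoff(lambda: breaker.call(slow_dependency), sleep=lambda s: None)
except TimeoutError:
    pass
print(breaker.opened_at is not None)  # True: three failures opened the breaker
```

Two choices matter more than the specific numbers: the retry loop stops as soon as the breaker opens instead of retrying into it, and the total number of attempts is fixed. The sketch retries on any exception for brevity; real code would typically retry only errors known to be transient, and only operations that are idempotent.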

Mistake 6: Scaling Features Faster Than Observability

As complexity grows, “it feels slower” stops being actionable. Without good signals, teams can chase the wrong fix, argue about anecdotes, and miss the actual failure point. Observability is not only logs; it is the ability to explain behavior under change.

Why It Happens

Instrumentation often competes with feature delivery. It can be framed as optional work until the first serious incident. Then the team needs traces, dashboards, and meaningful alerts immediately—when the system is already unstable.

Early Warning Signs

- Incidents start with “we can’t reproduce it,” and end with “we are not sure what fixed it.”

- Dashboards exist, yet they are not tied to user outcomes or service objectives.

- Alerts trigger often but do not help triage, creating alert fatigue and ignored signals.

Worst-Case Outcome (Without Drama)

Teams spend more time in diagnosis than in prevention. Mean time to recover grows, not because engineers lack skill, but because the system lacks explainability. Over time, this can reduce confidence in shipping changes and increase release friction.

A Safer Approach

Many teams find it safer to define a small set of golden signals (latency, errors, saturation, traffic) and connect them to user journeys. In early-stage growth, consistent structured logs can be a big step forward. In larger systems, distributed tracing and alert rules aligned with service objectives reduce guesswork.
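Structured logs can be as simple as one JSON object per line. The sketch below uses a custom `logging.Formatter` from Python's standard library; the logger name and the `route`, `status`, `latency_ms`, and `user_journey` fields are hypothetical, chosen to show how a log line can carry golden-signal data tied to a user journey.

```python
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    FIELDS = ("route", "status", "latency_ms", "user_journey")  # hypothetical field names

    def format(self, record: logging.LogRecord) -> str:
        entry: dict = {
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # Values passed via `extra=` become attributes on the LogRecord.
        for key in self.FIELDS:
            if hasattr(record, key):
                entry[key] = getattr(record, key)
        return json.dumps(entry)


handler = logging.StreamHandler()  # writes to stderr by default
handler.setFormatter(JsonFormatter())
log = logging.getLogger("checkout")
log.addHandler(handler)
log.setLevel(logging.INFO)

log.info("request finished", extra={
    "route": "/cart/checkout", "status": 200,
    "latency_ms": 87, "user_journey": "checkout",
})
```

Because each line is machine-parseable, error rate and latency percentiles per journey can be computed from logs before a full tracing setup exists.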

Mistake 7: Building Tight Coupling Between Services And Teams

As projects scale, teams often split into services. The risk is that the split is only organizational while the system stays tightly coupled through shared schemas, synchronous calls, and implicit dependencies. The result can be “microservices with monolith behavior.”

Why It Happens

Speed pressure pushes teams to reuse internal details. It feels efficient to call another service synchronously and expect a precise response. Over time, that creates dependency chains and shared failure across services that were meant to be independent.

Early Warning Signs

- A single deployment requires multiple teams to coordinate timing.

- Incidents spread across services in cascade patterns.

- APIs lack versioning, and “just one field change” becomes high risk.

Worst-Case Outcome (Without Drama)

Coupling can turn growth into a coordination tax. The system may still scale in capacity, yet the organization stops scaling in delivery. Releases become slower, and teams spend more time on alignment meetings than on solving customer problems.

A Safer Approach

Many projects benefit from making dependencies explicit: API contracts, versioning, and agreed expectations for latency and failure. In smaller projects, a single “contract doc” per dependency can reduce implicit assumptions. In larger systems, asynchronous patterns and bulkheads can reduce cascade risk while preserving team autonomy.
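A bulkhead can start as a per-dependency concurrency limit. The sketch below uses `threading.BoundedSemaphore` with a non-blocking acquire; the class, the `fallback` parameter, and the limit of 2 are illustrative assumptions rather than a standard API.

```python
import threading
from typing import Callable, TypeVar

T = TypeVar("T")


class Bulkhead:
    """Caps in-flight calls to one dependency so it cannot tie up every worker."""

    def __init__(self, name: str, max_concurrent: int):
        self.name = name
        self._slots = threading.BoundedSemaphore(max_concurrent)

    def call(self, fn: Callable[[], T], fallback: Callable[[], T]) -> T:
        # Non-blocking acquire: when the budget is used up, degrade instead of queueing.
        if not self._slots.acquire(blocking=False):
            return fallback()
        try:
            return fn()
        finally:
            self._slots.release()


recommendations = Bulkhead("recommendations", max_concurrent=2)


def fetch_recommendations() -> list:
    return ["item-1", "item-2"]  # stand-in for a real network call


print(recommendations.call(fetch_recommendations, fallback=lambda: []))
```

When the recommendations dependency slows down, at most two worker threads wait on it; every further caller gets the fallback immediately, so the slowdown stays local instead of exhausting a shared worker pool.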

Mistake 8: Letting Deployments Become The Riskiest Part Of The System

Scaling is not only runtime behavior. It is also how safely change moves through the system. In growing projects, deployments can become fragile due to manual steps, unclear rollback, and big-bang releases that bundle too many changes together.

Why It Happens

Teams often prioritize feature output and accept deployment friction as “temporary.” Over time, temporary becomes normal. Without deliberate investment, release processes can lag behind system complexity and turn every change into a high-stakes event.

Early Warning Signs

- Deployments happen less often because they feel unsafe or exhausting.

- Rollbacks rarely happen because the rollback path is unclear or incomplete.

- Incidents correlate with releases, not with traffic peaks.

Worst-Case Outcome (Without Drama)

Release friction can lock the project into large batches, which increases defect size and slows detection. The system may remain functional, yet the organization loses iteration speed and starts to ship less confidently, with more after-hours fixes and growing operational stress.

A Safer Approach

Safer deployment tends to look like smaller changes, clearer rollback paths, and progressive delivery (canaries, staged rollouts) where possible. In smaller projects, even a consistent checklist can reduce variance. In larger systems, automated verification and feature flags can reduce the need for high-risk releases while keeping change reversible.
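Staged rollouts often rely on stable bucketing, so the same user sees the same behavior on every request. The sketch below hashes the flag name and user ID with SHA-256 into 100 buckets; the function and flag names are hypothetical, and a real feature-flag service would add targeting rules and a kill switch.

```python
import hashlib


def in_rollout(flag: str, user_id: str, percent: int) -> bool:
    """Deterministically place a user in or out of a staged rollout.

    Hashing flag + user gives each user a stable bucket 0..99 per flag, so the
    decision does not change between requests or across servers.
    """
    digest = hashlib.sha256(f"{flag}:{user_id}".encode()).hexdigest()
    bucket = int(digest, 16) % 100
    return bucket < percent


users = [f"user-{n}" for n in range(1000)]
enabled = sum(in_rollout("new-checkout", u, 10) for u in users)
print(f"{enabled} of {len(users)} users see the new checkout")  # roughly 10%
```

Because a user is enabled whenever their bucket is below the percentage, raising the rollout from 5% to 25% only adds users, and setting it to 0 turns the feature off for everyone without a deploy.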

Mistake 9: Confusing “More Capacity” With “More Resilience”

Adding capacity can reduce saturation. It does not automatically add resilience. Resilience is about how the system behaves when parts fail: graceful degradation, isolation, and clear recovery paths. Without those, a bigger system can be a bigger failure surface.

Why It Happens

Capacity is visible: more instances, more throughput, fewer CPU alerts. Resilience is less visible until something breaks. Teams may assume resilience exists because the system has stayed up so far, even though it has not faced realistic fault scenarios at the new scale.

Early Warning Signs

- Incidents require heroic coordination rather than clear runbooks.

- Small dependency outages create wide impact instead of localized degradation.

- Post-incident actions focus on “add more resources” without addressing failure behavior.

Worst-Case Outcome (Without Drama)

The system can become a machine that works only in ideal conditions. When a dependency slows down, the system responds with pile-ups and timeouts rather than controlled shedding of work. That increases recovery time and forces teams into repeated “all-hands” responses, which is hard to sustain.

A Safer Approach

Resilience often improves when systems have explicit degradation modes (read-only, reduced features, queued writes), timeouts that are aligned across services, and recovery procedures that are tested under controlled conditions. In smaller projects, a single “what happens if this dependency is slow?” review can surface hidden coupling. In larger systems, regular game days can turn resilience into a practiced capability rather than a hope.
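One way to align timeouts is to give each user request a single deadline and derive every downstream timeout from what remains, switching to a degradation mode when too little is left. The sketch below assumes a hypothetical product page with optional reviews; the 10 ms floor and 250 ms per-hop cap are illustrative, not recommendations.

```python
import time
from typing import Callable, List, Optional


class Deadline:
    """Absolute deadline for one user request, shared by every dependency call it makes."""

    def __init__(self, budget_s: float, clock: Callable[[], float] = time.monotonic):
        self.clock = clock
        self.expires_at = clock() + budget_s

    def remaining(self) -> float:
        return max(0.0, self.expires_at - self.clock())

    def timeout_for(self, hop_cap_s: float) -> Optional[float]:
        """Timeout for the next call, never longer than the caller's remaining budget.

        Returns None when too little budget is left to be worth trying.
        """
        left = self.remaining()
        if left < 0.01:  # hypothetical floor: a call this short is unlikely to succeed
            return None
        return min(hop_cap_s, left)


def render_product_page(deadline: Deadline,
                        fetch_reviews: Callable[[float], List[str]]) -> dict:
    page: dict = {"product": "sku-1"}  # core content, assumed already loaded
    timeout = deadline.timeout_for(hop_cap_s=0.25)
    if timeout is None:
        page["reviews"], page["degraded"] = [], True  # reduced-feature mode
        return page
    try:
        page["reviews"], page["degraded"] = fetch_reviews(timeout), False
    except TimeoutError:
        page["reviews"], page["degraded"] = [], True
    return page


now = [0.0]
deadline = Deadline(budget_s=0.5, clock=lambda: now[0])
print(deadline.timeout_for(0.25))  # 0.25: plenty left, the per-hop cap applies
now[0] = 0.375
print(deadline.timeout_for(0.25))  # 0.125: only the remaining budget is offered
now[0] = 0.6
print(render_product_page(deadline, lambda timeout: ["great"]))  # degraded, no reviews
```

Across process boundaries, the remaining budget would travel with the request (for example as a header), so each service draws from the same budget instead of stacking independent timeouts; gRPC deadlines are one existing implementation of this idea.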

Risk Patterns That Repeat Across Most Scaling Failures

Most scaling problems differ in details, yet the underlying patterns are often the same. Seeing the pattern early can prevent a string of small “reasonable” decisions from turning into structural risk. The items below are less about blame and more about prediction.

- Hidden shared state that becomes a bottleneck as concurrency grows.

- Implicit contracts between teams that break during fast iteration.

- Work amplification (retries, fan-out) that multiplies load under stress.

- Unowned “grey areas” where no team owns the end-to-end path.

- Rollback uncertainty that turns deployments into risk events.

- Measurement gaps where decisions rely on anecdotes instead of signals.

A practical way to use this list: if two or three of these patterns feel “close” to your situation, the project may be approaching a scaling inflection point. That is often the moment when small investments in clarity and reversibility can prevent much larger rework later.

FAQ

How can a team tell whether it has a scaling problem or just a temporary spike?

Temporary spikes usually have a clear trigger and a clear end. Scaling problems show up as repeated spikes that grow in frequency, or as rising latency even when traffic looks “normal.” A useful clue is whether the system returns to a stable baseline without manual intervention or workarounds.

What is the most common “silent” scaling mistake?

One common silent mistake is unmeasured coupling: services depend on each other in ways that are not documented or monitored. It can feel fine until a small change increases call volume or introduces latency, then the impact spreads across multiple teams and becomes hard to localize.

Is caching always a good first step when performance degrades?

Caching can be helpful, yet it also introduces freshness decisions and invalidation risk. If the problem is a write bottleneck or lock contention, caching reads may not address the real constraint. It often helps to clarify what must be correct now versus what can be slightly stale.

Why do retries sometimes make outages worse?

Retries add extra work at the moment a dependency is already struggling. If many clients retry together, they can create traffic amplification and extend degraded periods. Bounded retries with backoff and clear failure limits tend to reduce that risk.

What tends to change first as a project grows: performance, reliability, or delivery speed?

Delivery speed often changes first, because coordination and deployment complexity rise with surface area. Performance issues may still be intermittent, and reliability may look “fine” until a threshold is crossed. Watching for release friction and rollback uncertainty can reveal scaling pressure early, before it becomes a runtime crisis.

How can teams reduce scaling risk without slowing feature delivery too much?

Teams often get leverage from small, targeted steps: agreeing on golden signals, documenting a bottleneck map, and keeping changes more reversible. These choices do not require predicting the future; they reduce uncertainty so feature work stays safer as the system grows.