Vendor selection can look like a procurement task, but the real risk often sits in operations: handoffs, uptime, support, and what happens when reality diverges from the sales demo. Most disruptions do not come from a single dramatic failure. They come from small assumptions that stay untested until the vendor is already embedded in daily work and the switching cost has become visible.

Operational disruption is rarely “the vendor is bad.” It is more often “the fit is unclear,” “the boundaries are fuzzy,” or “the plan for change is missing.” The goal is simple: spot the avoidable mistakes early, so the vendor relationship stays a tool rather than a dependency.

Why Vendor Selection Can Disrupt Operations

Vendors change the shape of work. They introduce new interfaces (people, systems, processes), new timelines (release cycles, lead times), and new failure modes (support queues, outages, supply delays). If selection focuses on features and price while ignoring operational fit, the “implementation phase” becomes a permanent firefighting phase.

Operational disruption usually shows up as latency (work slows down), fragility (small issues cascade), or lock-in (changing course becomes expensive).

Common Wrong Assumptions

- “A good product means smooth operations.” Feature quality and operational reliability are different disciplines.

- “Support will be fine because the vendor says so.” Support outcomes depend on queue load, staffing, and escalation paths.

- “Integration is straightforward.” Integration is where hidden assumptions and ownership gaps surface.

- “We can switch later if needed.” Switching later often means migrating data, retraining teams, and reworking processes under time pressure.

- “The contract covers it.” Contracts help, but operational outcomes depend on governance, habits, and clarity in day-to-day work.

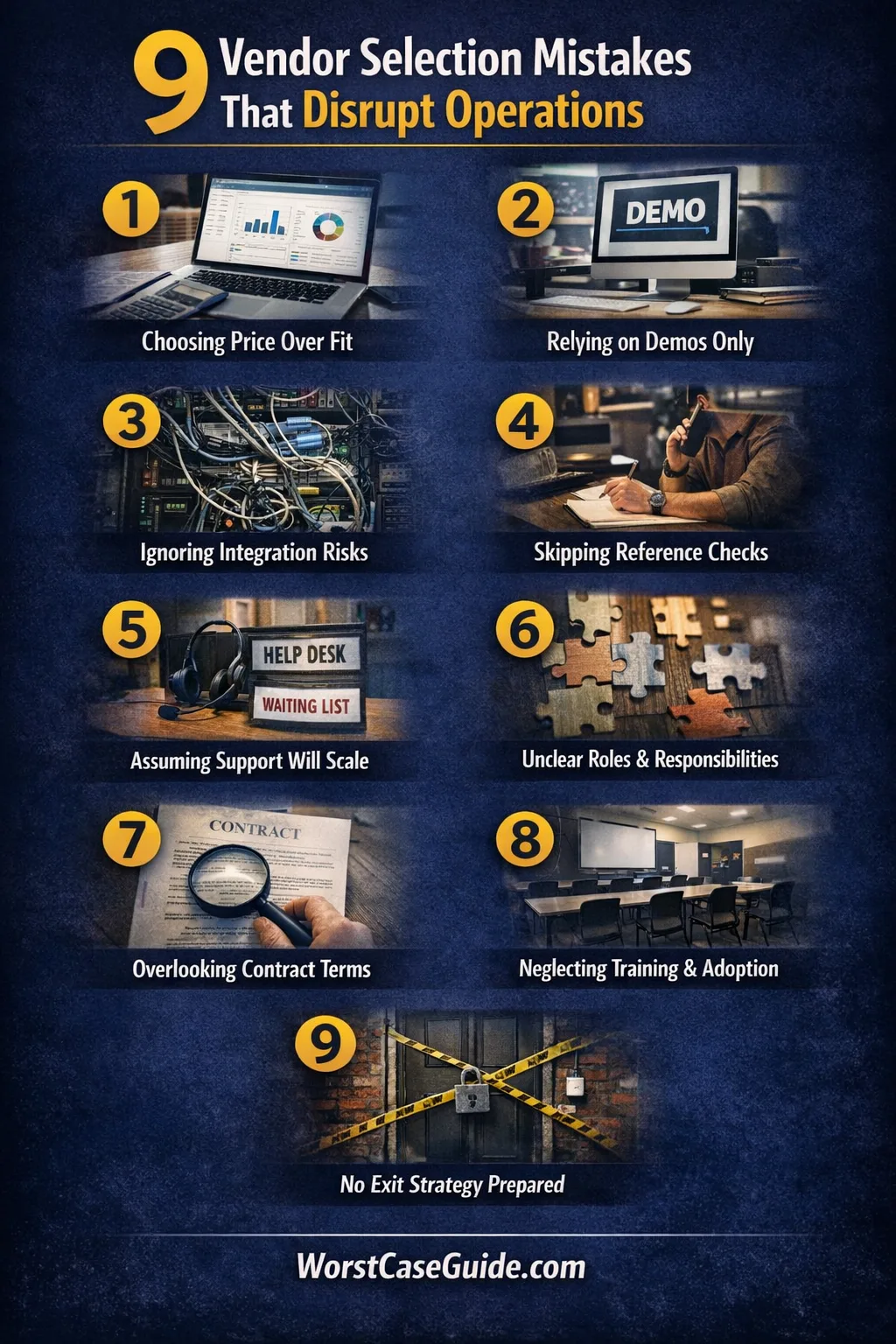

Vendor Selection Mistakes That Disrupt Operations

The mistakes below are written as operational failure patterns. Each one includes a realistic worst-case outcome (not exaggerated), along with a safer approach that tends to reduce disruption.

Mistake 1: Selecting For Price Or Features While Ignoring Operational Fit

Why It Happens

Selection conversations often reward what is easy to compare: price, feature checklists, and polished demos. Operational fit is harder to quantify because it lives in response times, handoffs, and how exceptions are handled when work gets messy.

Early Warning Signs

- Support and implementation are treated as “later,” not as selection criteria.

- Stakeholders who run day-to-day operations are not included in evaluation.

- Risk questions get vague answers like “we can figure it out.”

Worst-Case Result

The vendor becomes a bottleneck. Work slows, teams build workarounds, and small outages or delays compound into missed deadlines, rework, and customer-impacting incidents.

A Safer Approach

Some teams score vendors on a short “operational fit” set: support model, implementation ownership, incident handling, and maintenance overhead. If the vendor is part of a critical path, fit can matter as much as features.
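One way to make an "operational fit" score concrete is a small weighted average over the four criteria named above. This is a minimal sketch, not a standard method: the weights, the 1-5 scale, and the vendor scores are all hypothetical and would need to be set by the team doing the evaluation.

```python
# Hypothetical weights for the four criteria named above; they sum to 1.0.
WEIGHTS = {
    "support_model": 0.3,
    "implementation_ownership": 0.2,
    "incident_handling": 0.3,
    "maintenance_overhead": 0.2,
}


def operational_fit(scores: dict) -> float:
    """Return the weighted average of 1-5 scores for one vendor."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        # Refuse to score a vendor when a criterion was skipped.
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weight * scores[name] for name, weight in WEIGHTS.items())


# Illustrative scores only, not real vendor data.
vendor_a = {
    "support_model": 4,
    "implementation_ownership": 3,
    "incident_handling": 2,
    "maintenance_overhead": 4,
}
vendor_b = {
    "support_model": 3,
    "implementation_ownership": 4,
    "incident_handling": 4,
    "maintenance_overhead": 3,
}

for label, scores in [("vendor_a", vendor_a), ("vendor_b", vendor_b)]:
    print(label, round(operational_fit(scores), 2))
```

The number itself matters less than the conversation it forces: a missing score raises an error, so no criterion can be quietly left as "later."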

Mistake 2: Skipping A Real Use-Case Pilot And Relying On Demos

Why It Happens

Demos are optimized for clarity. Real operations are optimized for throughput, exception handling, and edge cases. Without a pilot, the most important questions stay unanswered: what breaks, who fixes it, and how long it takes.

Early Warning Signs

- Pilot is labeled “nice to have” because of timeline pressure.

- Evaluation uses generic scenarios, not the team’s messiest workflows.

- No one is assigned to measure operational outcomes (cycle time, error rate, downtime impact).

Worst-Case Result

The go-live becomes the pilot. Failures appear under real load, causing interruptions, rushed fixes, and a fragile setup that requires constant manual intervention to keep running.

A Safer Approach

A small, time-boxed pilot can focus on one critical workflow and a few “known ugly” cases. If the vendor cannot support the pilot with clear ownership and fast feedback, that is often a useful signal.
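Measuring a pilot does not need heavy tooling. The sketch below assumes someone logs each pilot run as a cycle time plus a flag for whether it needed manual rework. The record format and the sample values are hypothetical.

```python
from statistics import median

# Hypothetical pilot log: (cycle time in minutes, whether the run needed rework).
pilot_runs = [(12, False), (15, False), (48, True), (14, False), (60, True)]


def summarize(runs):
    """Summarize cycle time and error rate for a list of pilot runs."""
    cycle_times = [minutes for minutes, _ in runs]
    error_count = sum(1 for _, had_error in runs if had_error)
    return {
        "median_cycle_minutes": median(cycle_times),
        "worst_cycle_minutes": max(cycle_times),
        "error_rate": error_count / len(runs),
    }


summary = summarize(pilot_runs)
print(summary)
```

Comparing the median against the worst case is a quick way to see how much the "known ugly" cases cost relative to routine work.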

Mistake 3: Treating “Integration” As A Checkbox Instead Of A Risk Map

Why It Happens

Integration is often described as “we have an API” or “we connect to X.” Operational risk sits in data ownership, sync frequency, permissions, and what happens when systems disagree.

Early Warning Signs

- Integration questions stop at “is it possible,” not “is it maintainable.”

- Error handling, retries, and monitoring are not discussed.

- There is no clear answer for “who owns data accuracy when systems conflict.”

Worst-Case Result

Teams lose trust in the system because data becomes inconsistent. Manual reconciliation grows, exceptions pile up, and critical workflows slow down or stop when sync failures appear.

A Safer Approach

Some evaluations build an “integration risk map”: systems touched, data fields, failure points, and owners. It often reveals hidden scope and helps compare vendors on real operational complexity.

Small systems may only need basic sync and a clear fallback. Larger environments often need monitoring, access controls, and well-defined error handling from day one.
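An integration risk map can be as simple as a list of records with the four elements mentioned above. The following is a minimal sketch under assumed names: the `IntegrationTouchpoint` type, the systems, and the failure points are all illustrative.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class IntegrationTouchpoint:
    system: str                  # system the vendor tool touches
    data_fields: List[str]       # fields exchanged with that system
    failure_point: str           # what is most likely to go wrong
    owner: Optional[str] = None  # None means nobody has claimed it yet


def unowned(touchpoints):
    """Return touchpoints that have a known failure point but no owner."""
    return [t for t in touchpoints if t.owner is None]


# Illustrative entries only.
risk_map = [
    IntegrationTouchpoint(
        "CRM", ["customer_id", "email"], "nightly sync fails", owner="ops team"
    ),
    IntegrationTouchpoint(
        "billing", ["invoice_total"], "systems disagree on amount"
    ),
]

for t in unowned(risk_map):
    print(f"No owner: {t.system} ({t.failure_point})")
```

Listing unowned touchpoints during evaluation surfaces the "who owns data accuracy" question before go-live rather than during the first incident.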

Mistake 4: Not Checking References For Operational Reality

Why It Happens

Reference checks can become a formality: a quick call, a friendly customer, a positive story. Operational reality is more specific: downtime patterns, support responsiveness, implementation surprises, and what the vendor is like once the contract is signed.

Early Warning Signs

- References are only provided by the vendor, with no effort to find similar use cases.

- Questions focus on satisfaction, not on incidents and support.

- No one asks about the first 90 days of adoption.

Worst-Case Result

The organization discovers predictable problems too late: slow escalation, unstable releases, or frequent exceptions. The impact lands on operations as unplanned work and degraded service quality.

A Safer Approach

Reference checks tend to work better when they ask about specific events: major incident handling, support turnaround for urgent tickets, and how the vendor behaves under pressure. If the vendor is used in critical workflows, “a customer similar to us” is often more informative than “a happy customer.”

Mistake 5: Assuming Capacity And Support Will Scale With Your Needs

Why It Happens

Vendors may be strong at onboarding and sales, then weaker in steady-state operations. Support capacity depends on staffing, process maturity, and how the vendor prioritizes customers. Without clarity, the buyer assumes the vendor’s best-case support experience will continue.

Early Warning Signs

- Support model is described in broad terms, with no response and resolution expectations.

- Escalation paths depend on “knowing someone,” not a defined process.

- There is no clear plan for peak periods or incident coverage.

Worst-Case Result

When the first serious issue hits, the team waits in a queue while operations stall. Workarounds accumulate, and the organization accepts lower standards because restoring normal service becomes too slow.

A Safer Approach

Some teams define what “good enough support” looks like for their context: expected response time, clear escalation triggers, and a named owner on both sides. In high-criticality use cases, a vendor’s support structure can matter more than an extra feature.
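Writing support expectations down as explicit targets makes them checkable. The sketch below assumes two severity levels and response targets of one hour and eight hours. Those values, the function name, and the timestamps are hypothetical and stand in for whatever the team and vendor actually agree on.

```python
from datetime import datetime, timedelta

# Hypothetical first-response targets per severity level.
RESPONSE_TARGETS = {
    "urgent": timedelta(hours=1),
    "normal": timedelta(hours=8),
}


def missed_target(severity, opened, first_response):
    """Return True when the first response took longer than the target."""
    return (first_response - opened) > RESPONSE_TARGETS[severity]


# Illustrative ticket: opened at 09:00, first response at 10:30.
opened = datetime(2024, 1, 15, 9, 0)
first_response = datetime(2024, 1, 15, 10, 30)

print(missed_target("urgent", opened, first_response))
print(missed_target("normal", opened, first_response))
```

The same 90-minute response misses the urgent target and meets the normal one, which is why agreeing on what counts as urgent matters as much as the target itself.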

Mistake 6: Leaving Roles And Decision Rights Unclear Between Teams And Vendor

Why It Happens

During selection, everyone wants speed. RACI and governance feel like paperwork. Once work starts, unclear decision rights create delays: who approves changes, who owns configuration, who can stop a release, and who decides when a problem is “vendor-side” versus “internal.” That ambiguity becomes an operational tax.

Early Warning Signs

- Ownership shifts between teams depending on the problem.

- Questions like “who signs off” and “who is on call” get uncertain answers.

- Vendor expects the buyer to manage key pieces without explicit resourcing.

Worst-Case Result

Incidents bounce between groups while the clock runs. Changes are delayed or applied inconsistently. The system becomes fragile because it depends on informal coordination and personal relationships rather than clear operating rules.

A Safer Approach

Even a lightweight governance setup can reduce disruption: named owners, decision triggers, and a simple escalation path. In smaller projects, this can be one page. In larger systems, it can be a standing operational rhythm with regular reviews.

Mistake 7: Overlooking Contract And Policy Details That Affect Day-To-Day Operations

Why It Happens

Contracts can be treated as a legal formality, separate from operations. Yet the fine print often defines operational realities: change windows, data access, incident reporting, audit rights, and how quickly services can be modified or ended. The risk is not legal complexity; it is operational constraints hiding in legal language.

Early Warning Signs

- Data ownership and access are discussed vaguely (“it’s your data”) without practical export steps.

- Service levels, maintenance windows, and incident communication are not aligned with operational needs.

- Termination and transition are considered “unlikely,” so they are not planned.

Worst-Case Result

A change is needed (scope shift, compliance need, exit), and the organization learns that it cannot move quickly. The vendor relationship becomes a constraint on operations, not a support. Teams work around it, increasing risk and complexity.

A Safer Approach

Some teams translate key contract points into an operational checklist: what happens in an incident, how data can be exported, how changes are approved, and what an orderly transition would look like. Where needed, a specialist review can help, since the goal is operational clarity rather than legal perfection.

Mistake 8: Not Planning Onboarding, Training, And Adoption As Operational Work

Why It Happens

Vendor selection can assume “teams will adapt.” Adoption is treated as a communication task, not operational engineering. In reality, onboarding changes habits, handoffs, and error rates. Without a plan, productivity dips last longer and hidden failure modes persist.

Early Warning Signs

- No dedicated time for training, sandboxing, or practice workflows.

- Success is defined as “system is live,” not “work is smooth.”

- Frontline teams are expected to discover new processes through trial and error.

Worst-Case Result

Operational load increases because users make predictable mistakes, revert to old tools, or build informal workarounds. The organization ends up with a hybrid process that is harder to monitor and easier to break, with inconsistent outcomes.

A Safer Approach

Adoption planning often works best when it includes: minimal viable training, clear “new normal” workflows, and a short period of heightened support. In smaller teams, this can be informal but still explicit. In larger organizations, it usually needs a defined rollout and feedback loop.

Mistake 9: Ignoring Exit And Continuity Planning Until It Is Too Late

Why It Happens

Exit planning can feel pessimistic, so it gets postponed. Continuity planning can feel abstract, so it is deferred. Yet operational resilience depends on knowing what happens if service degrades, if the vendor changes direction, or if your needs evolve beyond the vendor’s roadmap. This is less about distrust and more about reversibility.

Early Warning Signs

- No documented path for exporting data, configurations, and historical logs.

- There is no fallback workflow if the vendor system is down.

- Key knowledge sits with one administrator or one vendor contact, creating single points of failure.

Worst-Case Result

A disruption turns into prolonged operational damage because switching is slow and the fallback is unclear. Teams accept degraded service longer than they should, and the vendor relationship becomes a hard dependency with limited options.

A Safer Approach

Continuity planning can stay lightweight: define a fallback workflow, confirm practical export steps, and document who can execute them. For critical operations, some teams periodically test a small “exit drill” in a non-production setting to validate assumptions without disruption.

Quick Risk Map Table

This table is not a scorecard. It is a way to spot where operational risk concentrates, so selection discussions can focus on the right trade-offs.

| Mistake | What It Usually Breaks | Early Signal | Safer Angle |
|---|---|---|---|
| Price/features first | Support, uptime, day-to-day flow | Ops not in evaluation | Score operational fit |
| No real pilot | Go-live stability | Edge cases ignored | Time-boxed critical workflow test |
| Integration as checkbox | Data integrity, reliability | No monitoring plan | Integration risk map + owners |
| Weak reference checks | Incident handling reality | Only “happy” references | Ask about specific incidents |
| Assumed support scale | Resolution time under pressure | Vague escalation | Define response expectations |
| Unclear decision rights | Change speed, incident clarity | Ownership shifts | Lightweight governance |
| Policy/contract blind spots | Change constraints, data access | No practical export story | Translate into ops checklist |
| Adoption not operationalized | Throughput, error rate | “Live” equals “done” | Rollout + support window |
| No exit/continuity plan | Resilience, reversibility | No fallback workflow | Confirm exports + fallback steps |

Risk Patterns That Repeat Across Vendors

Pattern: Hidden Dependencies

Disruption risk rises when a vendor becomes a single point in workflows, and the organization cannot route around it. The signals are usually quiet: increasing exceptions, more manual steps, and growing reliance on one person who “knows how it works.”

Pattern: Ambiguous Ownership

When roles are unclear, issues bounce between teams and the vendor. That creates latency and erodes trust. Clear ownership does not prevent incidents, but it usually shortens them and limits the collateral damage.

Pattern: Unpriced Operational Work

Operational costs often appear as internal time: monitoring, troubleshooting, training, reconciliation. If that work is not surfaced during selection, the vendor looks “efficient” on paper but expensive in practice. The mismatch becomes visible through burnout and recurring small failures.

Pattern: Irreversible Commitments

Lock-in is not always technical. It can be process lock-in: workflows that only work inside one vendor’s tool. When reversibility is low, teams hesitate to change even when problems are visible, increasing exposure to prolonged disruption.

FAQ

What is the earliest sign that a vendor choice will disrupt operations?

A common early sign is rising workarounds: people start creating manual steps or parallel tracking because the vendor system does not fit daily flow. Another is vague ownership: questions about who fixes what do not have a clear, repeatable answer.

How can a small team evaluate operational fit without a long procurement process?

Small teams often learn the most from a short pilot around one critical workflow, plus a focused review of support expectations and integration touchpoints. The key is to test a few edge cases that already cause friction in current operations.

Is it normal for vendor onboarding to reduce productivity at first?

Yes, a temporary dip can be normal because routines change and new failure modes appear. The operational question is whether the dip is bounded (planned training, clear workflows, fast support) or open-ended (unclear processes, recurring errors, no ownership).

What makes vendor support “good enough” for operationally critical work?

“Good enough” usually means predictable response and escalation behavior under pressure. For critical paths, it often includes clear triggers for urgent handling, a defined escalation route, and a shared understanding of what counts as an incident.

How should exit planning be handled without assuming the vendor will fail?

Exit planning can be treated as reversibility hygiene: confirm data export steps, document a fallback workflow, and clarify who can execute them. This supports continuity even if the vendor performs well, because needs and priorities can still change.